Overview

As artificial intelligence continues to reshape digital content creation, governments and regulators worldwide are introducing stricter frameworks to control the misuse of synthetic media. In 2026, the focus has clearly shifted from banning AI technologies to requiring transparency, accountability, and rapid enforcement.

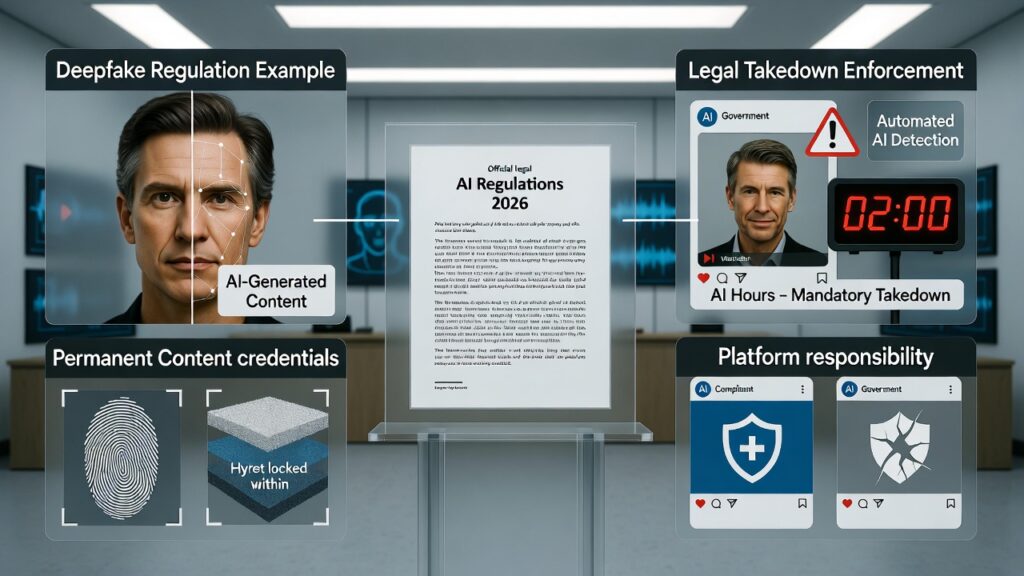

New AI regulations now emphasize mandatory labeling of AI-generated content, permanent content credentials, accelerated takedown timelines for harmful material, and increased legal responsibility for online platforms.

Why AI Regulation Is Tightening in 2026

The rapid growth of generative AI has made it easier to create realistic images, videos, and audio that can mislead users. Authorities have raised concerns over:

- Deepfakes impersonating real individuals

- Non-consensual AI-generated content

- Election-related misinformation

- Financial fraud and identity abuse

- AI disclaimers being stripped once content is shared

To address these risks, regulators are prioritizing early identification and traceability of AI content rather than action taken only after the damage is done.

Key AI Regulation Changes Emerging in 2026

1. Mandatory AI Labeling for Synthetic Content

Under new regulatory expectations, synthetically generated information (SGI) must be clearly disclosed. This includes:

- AI-generated images and videos

- Synthetic or cloned audio

- AI-altered visuals presented as real

The goal is to ensure users can immediately identify whether content is artificial, reducing the risk of deception.

2. Permanent Metadata and Digital Content Credentials

One of the most significant changes involves embedding persistent metadata, sometimes referred to as digital content credentials or digital DNA, into AI-generated media.

These identifiers are designed to:

- Stay attached to the content across platforms

- Make AI disclosures difficult to remove

- Enable detection even after edits or reuploads

This directly addresses the problem of AI labels being stripped once content spreads online.
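To make the idea of a content credential more concrete, here is a minimal Python sketch of how a disclosure can be cryptographically bound to the exact bytes of a file. It is a simplified illustration, not the C2PA standard or any provider's actual implementation: the names `make_credential`, `verify_credential`, and `SIGNING_KEY` are hypothetical, and a shared-secret HMAC stands in for the certificate-based signatures real systems use. Note what it does and does not show: it makes tampering detectable (editing the content or flipping the AI flag breaks verification), but surviving edits and reuploads typically relies on additional techniques such as invisible watermarking, which this sketch does not implement.

```python
import hashlib
import hmac
import json

# Hypothetical signing key held by the AI provider. Real content-credential
# systems use certificate-based signatures; HMAC keeps this sketch self-contained.
SIGNING_KEY = b"demo-provider-key"


def _sign(manifest: dict) -> str:
    """Sign a canonical (sorted-key) JSON encoding of the manifest."""
    payload = json.dumps(manifest, sort_keys=True).encode()
    return hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()


def make_credential(content: bytes, generator: str) -> dict:
    """Build a signed manifest that binds an AI disclosure to these exact bytes."""
    manifest = {
        "ai_generated": True,
        "generator": generator,
        "content_sha256": hashlib.sha256(content).hexdigest(),
    }
    return {"manifest": manifest, "signature": _sign(manifest)}


def verify_credential(content: bytes, credential: dict) -> bool:
    """True only if the manifest is unmodified and matches the content's hash."""
    if not hmac.compare_digest(_sign(credential["manifest"]), credential["signature"]):
        return False  # the manifest itself was altered, e.g. the AI flag was flipped
    current_hash = hashlib.sha256(content).hexdigest()
    return credential["manifest"]["content_sha256"] == current_hash


if __name__ == "__main__":
    image = b"synthetic image bytes"
    cred = make_credential(image, generator="example-model-v1")
    print(verify_credential(image, cred))             # True
    print(verify_credential(image + b"edited", cred))  # False
```

Because the signature covers the content hash, stripping or rewriting the disclosure cannot go unnoticed by a verifier that checks the credential, which is the property the identifiers above are meant to provide.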

3. Faster Takedown Timelines for Illegal AI Content

Recent regulatory proposals set strict deadlines for platforms to act once an official order is issued:

- Up to 3 hours for illegal AI content after a valid government or court order

- As little as 2 hours for non-consensual deepfakes or intimate synthetic media

These timelines reflect the speed at which harmful AI content can go viral.
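Windows this short are hard to meet by hand, especially when an order arrives late at night. The Python sketch below shows how a platform might compute and monitor removal deadlines. It assumes the clock starts when the order is received and treats both figures above as maximum windows; actual rules may define the starting point differently. The category keys and function names are hypothetical labels, not terms from any specific regulation.

```python
from datetime import datetime, timedelta, timezone

# Maximum response windows, taken from the timelines above.
# Category keys are hypothetical labels chosen for this sketch.
RESPONSE_WINDOWS = {
    "illegal_ai_content": timedelta(hours=3),
    "non_consensual_deepfake": timedelta(hours=2),
}


def takedown_deadline(order_received: datetime, category: str) -> datetime:
    """Latest time by which content must be removed after a valid order."""
    if order_received.tzinfo is None:
        # Naive timestamps are ambiguous across time zones, so reject them.
        raise ValueError("order_received must be timezone-aware")
    return order_received + RESPONSE_WINDOWS[category]


def is_overdue(order_received: datetime, category: str, now: datetime) -> bool:
    """True once the removal deadline for this order has passed."""
    return now > takedown_deadline(order_received, category)


if __name__ == "__main__":
    order = datetime(2026, 3, 1, 22, 30, tzinfo=timezone.utc)
    print(takedown_deadline(order, "non_consensual_deepfake"))  # 2026-03-02 00:30:00+00:00
```

The example order crosses midnight, which is exactly the situation where automated tracking and alerting matter most.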

4. Increased Platform Liability and Safe Harbour Risk

Online platforms traditionally benefit from safe harbour protections, which shield them from liability for user-generated content. However, regulators have clarified that this protection depends on active compliance.

Failure to:

- Enforce AI labeling

- Act quickly on takedown orders

- Deploy reasonable detection systems

may result in platforms losing safe harbour immunity, exposing them to legal consequences under national IT laws.

5. Self-Declaration and Automated Detection Requirements

Platforms are now expected to adopt a dual-layer approach:

- User self-declaration: Uploaders may be required to disclose whether content is AI-generated or AI-assisted.

- Automated detection: Platforms must deploy tools to identify undeclared synthetic content and apply labels or restrictions automatically.

This reduces reliance on user honesty alone.
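A minimal Python sketch of how the two layers might be combined into a single labeling decision follows. Everything here is an assumption for illustration: the detector score is presumed to come from some platform-specific detection model, and the label names and thresholds are hypothetical values a platform would need to calibrate on its own data. A positive self-declaration is accepted as-is, while undeclared content is labeled automatically or routed to human review depending on how confident the detector is.

```python
from dataclasses import dataclass
from enum import Enum


class Label(Enum):
    NONE = "none"
    AI_GENERATED = "AI-generated"                # uploader declared it
    LIKELY_AI_GENERATED = "Likely AI-generated"  # detector flagged undeclared content
    NEEDS_REVIEW = "Needs review"                # uncertain, send to a human moderator


@dataclass
class Upload:
    declared_ai: bool      # layer 1: the uploader's self-declaration
    detector_score: float  # layer 2: 0.0 to 1.0 from a hypothetical detection model


# Hypothetical thresholds, not values taken from any regulation.
AUTO_LABEL_THRESHOLD = 0.9
REVIEW_THRESHOLD = 0.6


def decide_label(upload: Upload) -> Label:
    """Combine self-declaration and automated detection into one label."""
    if upload.declared_ai:
        return Label.AI_GENERATED
    if upload.detector_score >= AUTO_LABEL_THRESHOLD:
        return Label.LIKELY_AI_GENERATED
    if upload.detector_score >= REVIEW_THRESHOLD:
        return Label.NEEDS_REVIEW
    return Label.NONE


if __name__ == "__main__":
    print(decide_label(Upload(declared_ai=False, detector_score=0.95)).value)
```

Keeping a separate review band reflects a design trade-off: detectors produce false positives, so only high-confidence cases are labeled without a human in the loop.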

Global Context: California’s AI Transparency Law

Similar measures are being adopted internationally. In the United States, California’s AI Transparency Act (SB 942) comes into force on January 1, 2026.

The law requires:

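- AI providers to embed latent disclosures (machine-readable content credentials) in AI-generated images, video, and audio by default

- A free, publicly accessible AI detection tool to be made available

- Users to be offered a visible (manifest) disclosure that clearly identifies AI-generated media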

This legislation is widely viewed as a global reference point for future AI governance.

What This Means for Publishers, Creators, and Businesses

- AI-generated content remains legal and permitted

- Transparency is now mandatory, not optional

- Content traceability is becoming a standard requirement

- Platforms face real compliance and liability risks

- Adopting disclosure practices early builds audience trust and reduces monetization risk

For news publishers and businesses, proper AI disclosure is increasingly seen as a best practice rather than a limitation.

Conclusion

The regulatory direction for 2026 is clear. Governments are not restricting AI innovation, but they are demanding responsibility in how synthetic media is created, shared, and moderated.

By enforcing mandatory labeling, permanent content credentials, faster takedowns, and platform accountability, regulators aim to reduce harm while allowing AI technologies to continue evolving.

AI is allowed. Transparency is mandatory. Accountability is unavoidable.