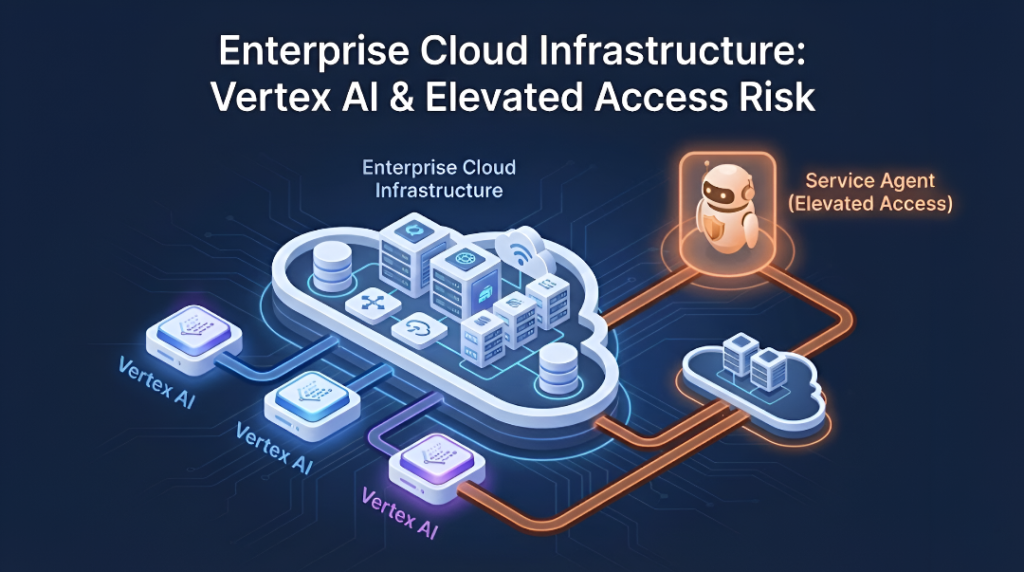

Security researchers have reported a privilege escalation risk in the default configuration of Google Vertex AI. The issue lets users with low-level or read-only permissions indirectly obtain the access of high-privilege Service Agents, which can affect enterprise cloud environments.

The findings were disclosed by XM Cyber researchers and later reviewed by Google, which stated that the behavior aligns with the current design model. Researchers, however, demonstrated that this design can lead to real-world privilege escalation scenarios.

Overview of the Issue

Vertex AI uses Service Agents, which are Google-managed identities attached automatically to AI components for internal operations.

These Service Agents are granted broad project-level permissions by default.

Researchers observed that when access to these Service Agents is indirectly exposed, users with limited permissions may be able to perform actions beyond their assigned role, without modifying IAM policies.

Affected Vertex AI Components

The risk was confirmed in two Vertex AI components:

- Vertex AI Agent Engine

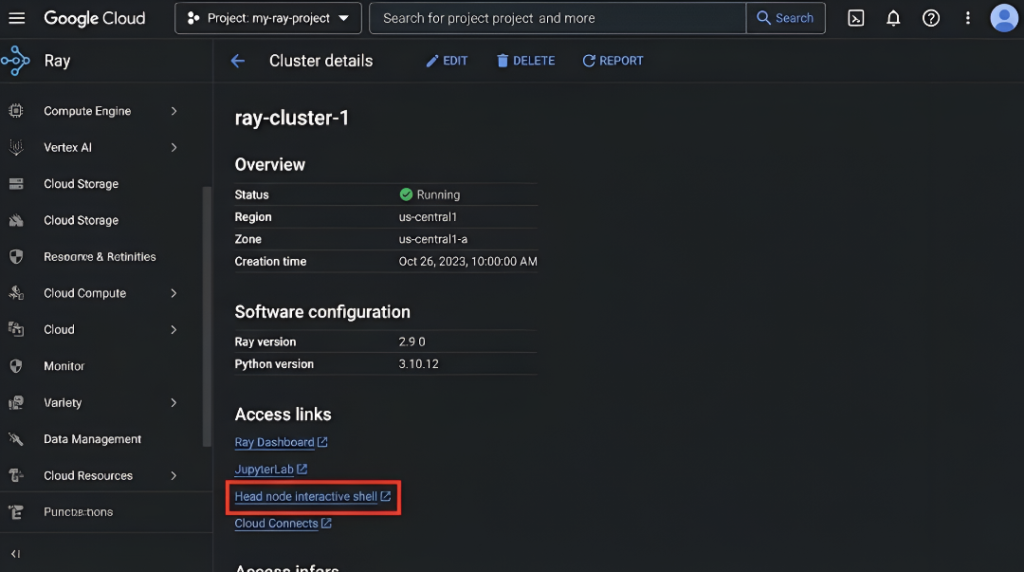

- Ray on Vertex AI

In both cases, the issue is linked to permission inheritance and default trust relationships, not to malware, zero-day exploits, or software bugs.

Observed Impact (Validated)

According to XM Cyber’s analysis, the default behavior can result in:

- Access to AI agent memory and session data

- Visibility into logging and operational data

- Read and write access to Cloud Storage resources

- Read and write access to BigQuery datasets (in Ray-based environments)

These outcomes were observed starting from low-privilege access (limited update access for Vertex AI Agent Engine, Viewer-level access for Ray on Vertex AI), making the escalation risk significant for enterprise deployments.

Summary of Findings

| Component | Service Agent Involved | Initial Access Level | Observed Risk |
|---|---|---|---|
| Vertex AI Agent Engine | Reasoning Engine Service Agent | Limited update access | Exposure of AI data and storage |
| Ray on Vertex AI | Custom Code Service Agent | Viewer-level access | Elevated access to storage and analytics |

Why This Matters

Vertex AI is widely used for production AI workloads, where systems often process sensitive business data.

When Service Agents hold extensive permissions by default, any indirect exposure increases the attack surface and expands the potential impact across a cloud project.

Security researchers describe this as a confused-deputy risk, where trusted system components unintentionally perform actions on behalf of low-privileged users.

Current Status

- The issue was responsibly disclosed to Google

- Google reviewed the findings and classified them as design-related

- No changes to default Service Agent permissions have been announced

- Researchers advise enterprises to treat default Vertex AI configurations as risk-bearing, not secure baselines
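
One way to start reviewing a default configuration is to list which roles the Vertex AI Service Agents hold in a project. The Python sketch below is illustrative only. It assumes an IAM policy exported as JSON (for example with `gcloud projects get-iam-policy PROJECT_ID --format=json`) and assumes that Vertex AI Service Agent emails contain the `@gcp-sa-aiplatform` domain prefix, which should be verified against your own project. The function names, the sample policy, and the project number are hypothetical and do not come from the XM Cyber report.

```python
import json

# Assumption: Vertex AI Service Agent emails contain this domain prefix.
# Verify the exact addresses in your own project before relying on it.
AGENT_DOMAIN_MARKER = "@gcp-sa-aiplatform"


def load_policy(path: str) -> dict:
    """Load an IAM policy previously exported to a JSON file."""
    with open(path, encoding="utf-8") as handle:
        return json.load(handle)


def vertex_agent_roles(policy: dict) -> dict:
    """Map each Vertex AI Service Agent member to the sorted roles it holds."""
    roles_by_member = {}
    for binding in policy.get("bindings", []):
        for member in binding.get("members", []):
            is_service_account = member.startswith("serviceAccount:")
            if is_service_account and AGENT_DOMAIN_MARKER in member:
                roles_by_member.setdefault(member, set()).add(binding["role"])
    return {member: sorted(roles) for member, roles in roles_by_member.items()}


# Hypothetical policy for illustration; real role names and members will differ.
SAMPLE_POLICY = {
    "bindings": [
        {
            "role": "roles/aiplatform.customCodeServiceAgent",
            "members": [
                "serviceAccount:service-123456789012@gcp-sa-aiplatform-cc.iam.gserviceaccount.com"
            ],
        },
        {
            "role": "roles/storage.objectViewer",
            "members": [
                "user:analyst@example.com",
                "serviceAccount:service-123456789012@gcp-sa-aiplatform-re.iam.gserviceaccount.com",
            ],
        },
        {"role": "roles/viewer", "members": ["user:analyst@example.com"]},
    ]
}

if __name__ == "__main__":
    for member, roles in vertex_agent_roles(SAMPLE_POLICY).items():
        print(member)
        for role in roles:
            print("  ", role)
```

The output is only a starting point for review: it shows which roles are bound to the Service Agents, not which individual permissions those roles contain or which users can indirectly reach them.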